Pankraz Piktograph

2021

created with Joris Wegner

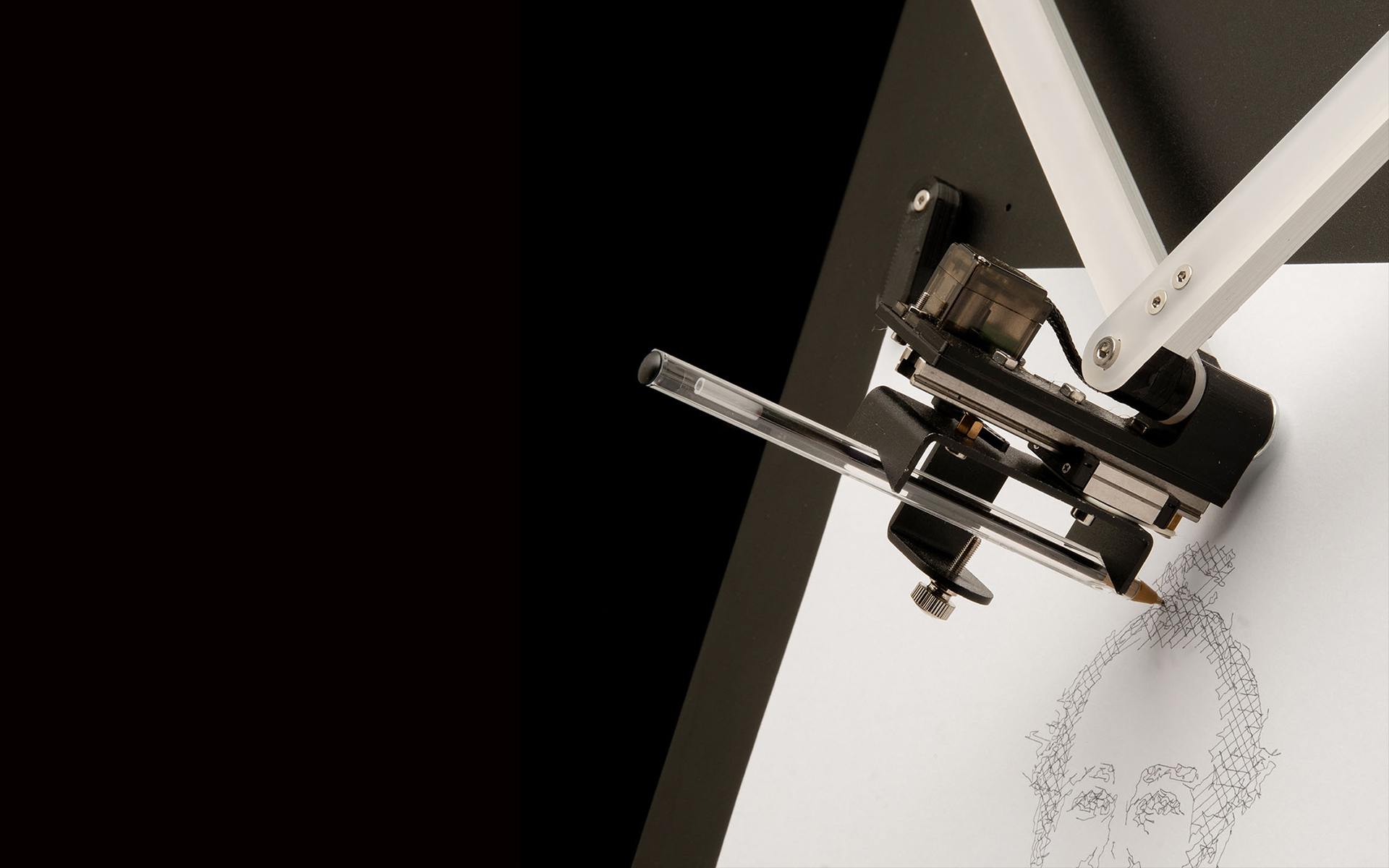

Pankraz Piktograph is a self-contained portrait drawing robot and also our master's thesis. Inspired by historical drawing automatons like Maillardet’s Automaton and recent examples like Patrick Tresset’s Paul the Robot, we decided to build a robot that draws portraits of bystanders at science or trade fairs, in museums, or at other kinds of events. Instead of handing visitors a regular photo as a souvenir, as commercial selfie boxes and photo booths do, our robot turns the creation of the souvenir image into an event in itself.

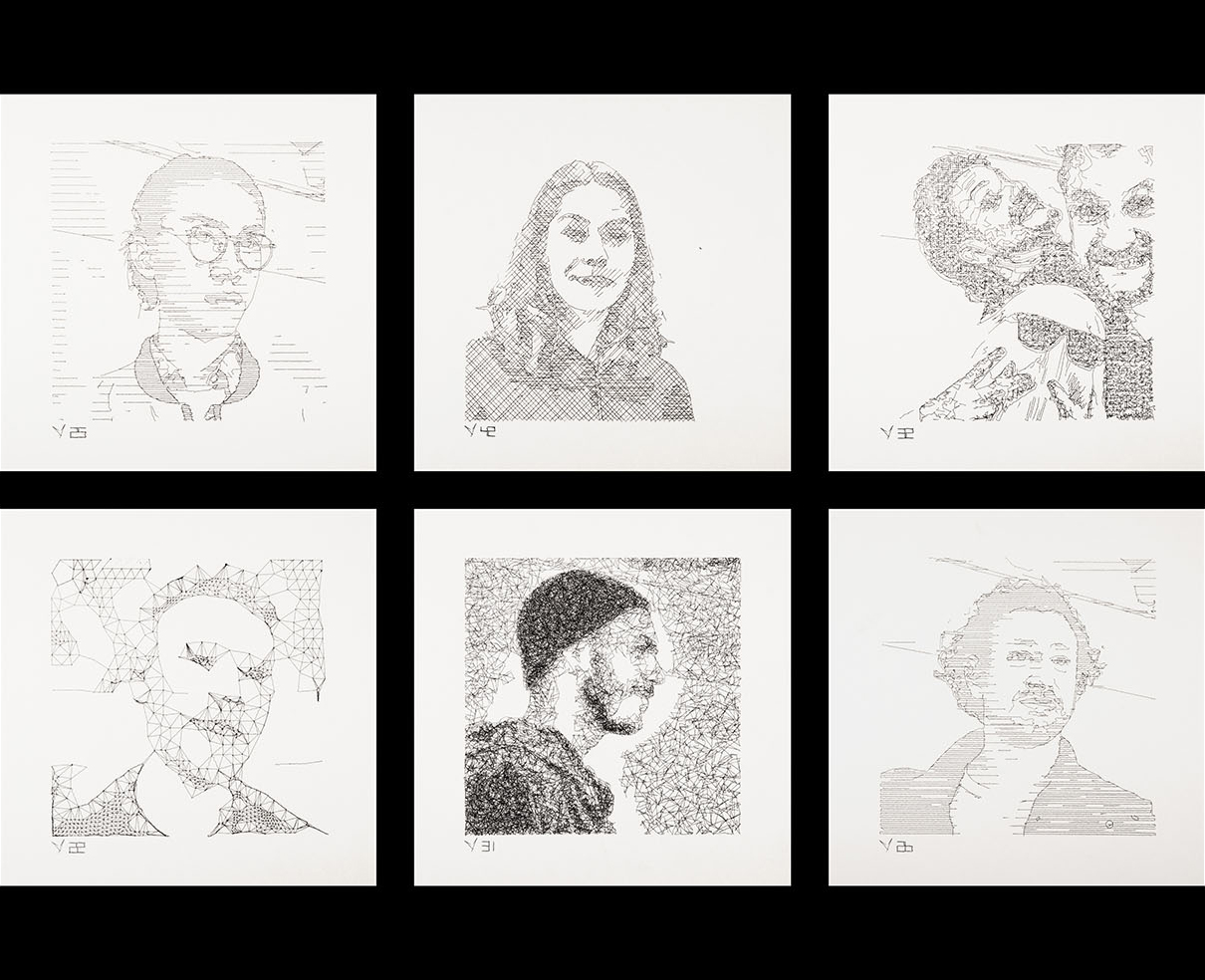

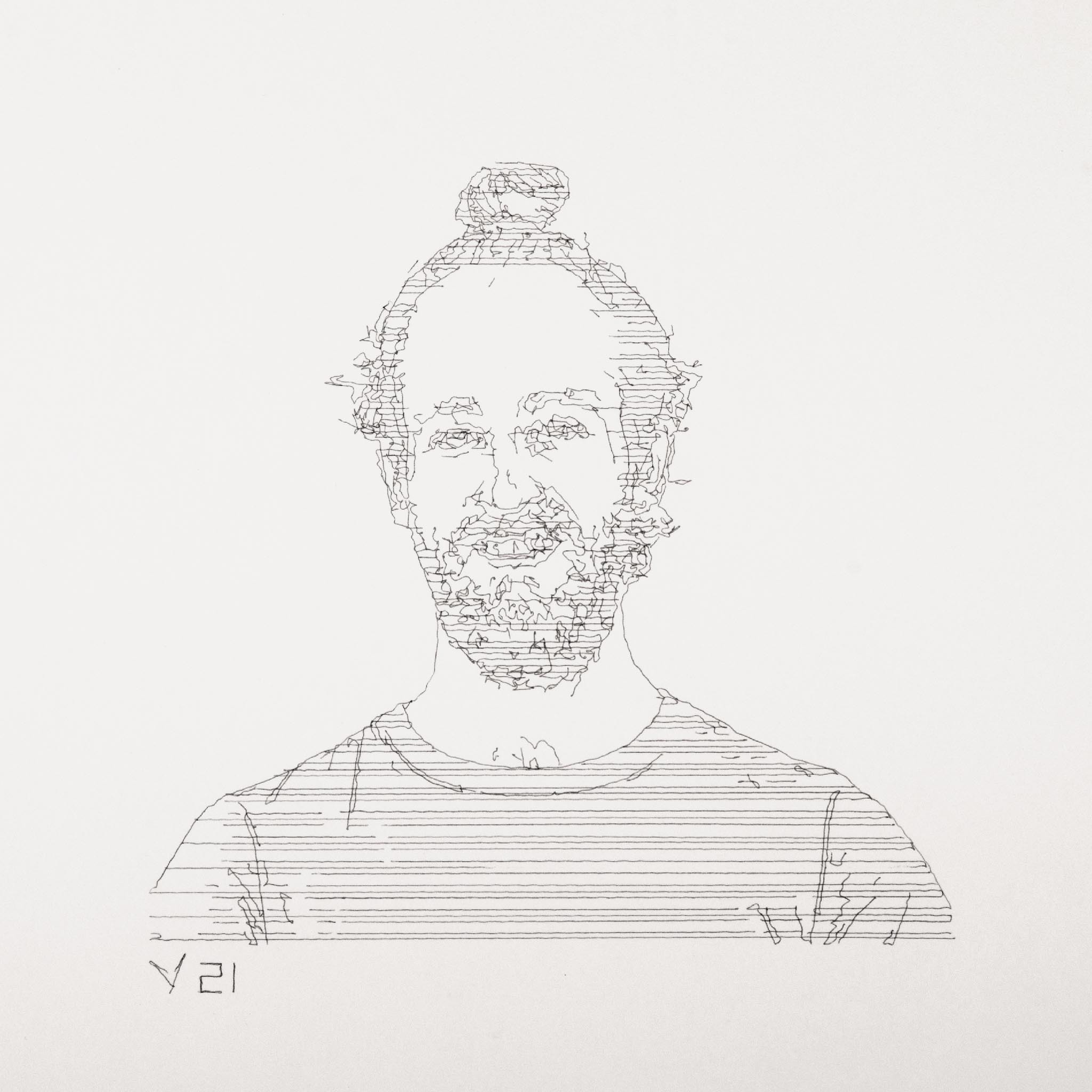

The robot takes a portrait of its user at the press of a button on a wired remote. The resulting image is then stylized and translated into a vector representation that the automaton can draw. The Pankraz Piktograph masters various drawing styles, from fast, minimalist line drawings through abstract representations to finely rendered portraits. Every drawing is a unique artwork and is marked with a serial number.

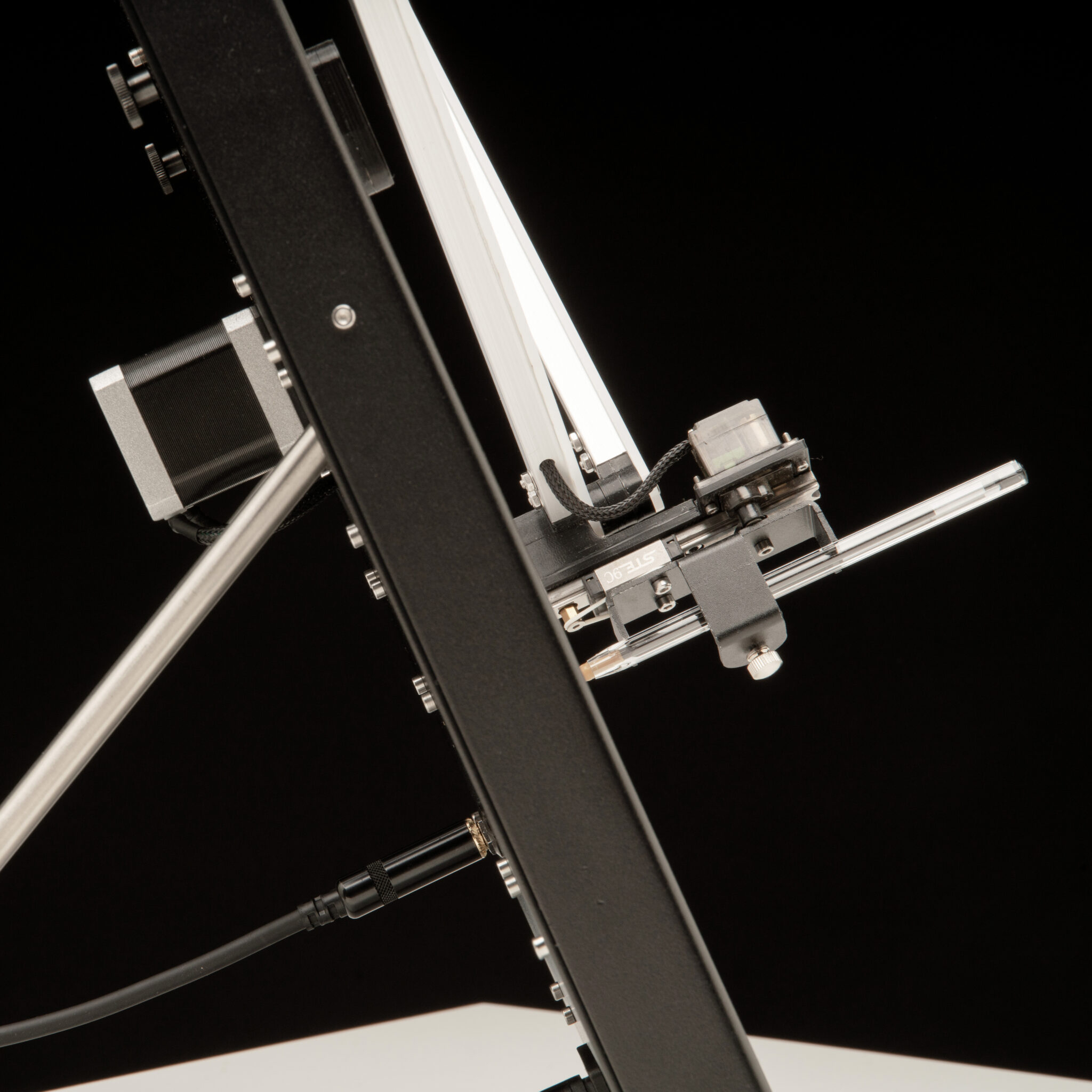

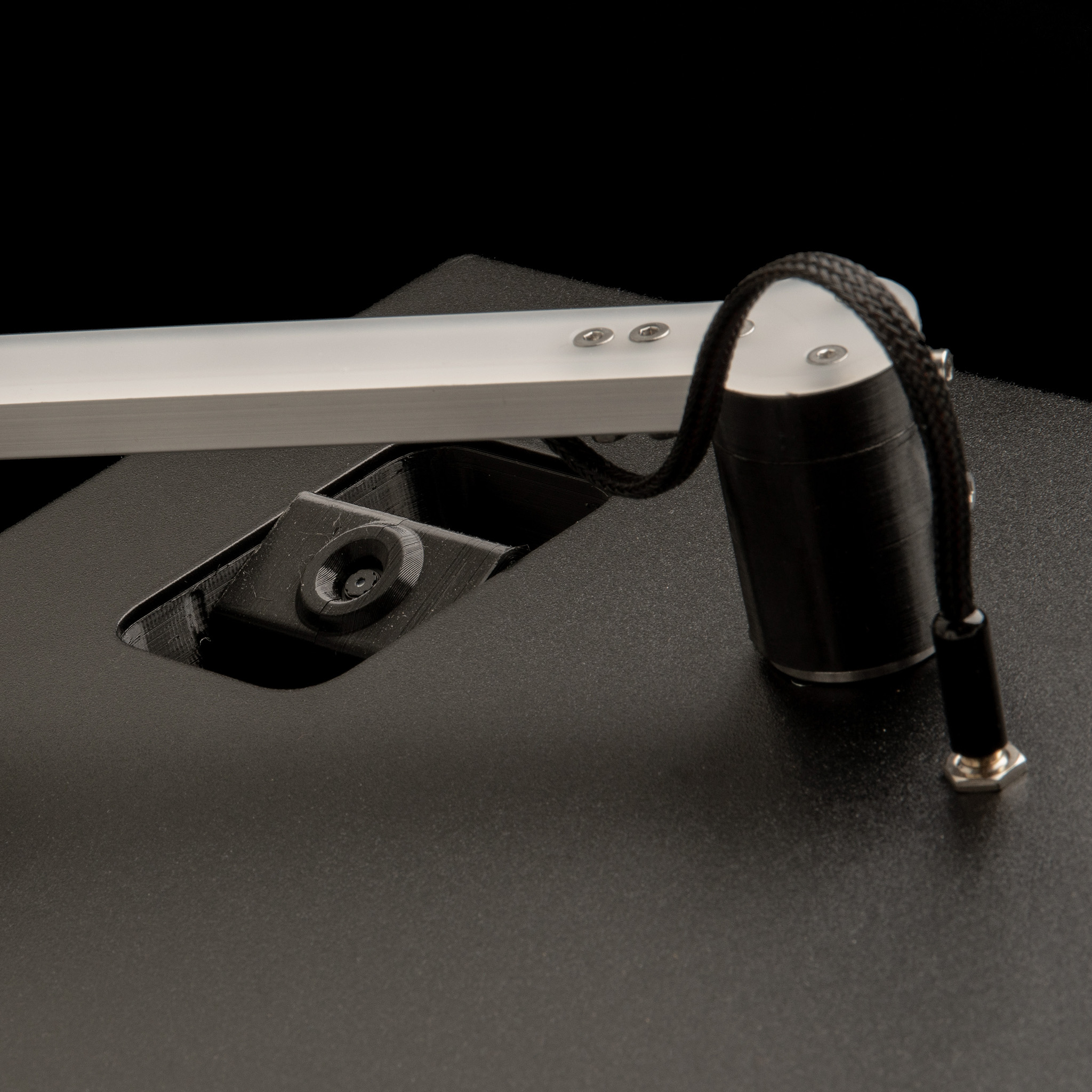

hardware

A Raspberry Pi 3 is the heart of the drawing robot. It drives the 3.5" display, which shows instruction pictograms and a preview of the integrated camera's image. After the user takes a picture with the wired remote control and accepts it as the template for the drawing, the Pi generates a vector graphic from the image. The resulting vertices are transmitted to a Teensy microcontroller, which then controls the actuators of the machine.

Each arm is moved by a stepper motor via a one-to-five pulley transmission, which increases both the torque and the angular resolution of the movements. We opted for open-loop control: light barrier sensors at each shoulder joint are used for calibration, i.e. for determining the absolute position of each arm. Afterwards, the arms can be positioned precisely by keeping track of the motors' steps. The pen is actuated by a small servo motor and is spring-loaded, so that it keeps sufficient contact with the paper while remaining compliant to slight irregularities of the drawing surface.
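The open-loop positioning described above can be sketched as follows: home each arm against its light barrier once, then derive every later position purely from counted steps. This is a minimal illustration, not the robot's firmware. Only the 1:5 pulley ratio comes from the text; the 200 steps per motor revolution, the 16x microstepping, the home angle, and the `ArmAxis` class with its methods are hypothetical, and the light barrier is simulated by a callback.

```python
# Minimal open-loop joint sketch. Values marked "assumed" are hypothetical.
STEPS_PER_MOTOR_REV = 200  # assumed: typical 1.8 degree stepper motor
MICROSTEPS = 16            # assumed driver microstepping setting
PULLEY_RATIO = 5           # one-to-five pulley transmission (from the text)

STEPS_PER_ARM_REV = STEPS_PER_MOTOR_REV * MICROSTEPS * PULLEY_RATIO


class ArmAxis:
    """Hypothetical joint whose absolute angle is known only after homing."""

    def __init__(self, home_angle_deg=0.0):
        # Assumed arm angle at which the light barrier trips.
        self.home_angle_deg = home_angle_deg
        self.steps = None  # position unknown until homed

    def home(self, barrier_triggered):
        """Count steps toward the barrier until it trips (simulated callback
        taking the number of steps moved so far), then zero the counter."""
        moved = 0
        while not barrier_triggered(moved):
            moved += 1  # on real hardware: issue one step pulse here
        self.steps = 0
        return moved

    def steps_for(self, angle_deg):
        """Absolute step count corresponding to an arm angle."""
        return round((angle_deg - self.home_angle_deg) / 360.0 * STEPS_PER_ARM_REV)

    def move_to(self, angle_deg):
        """Return the signed number of steps to issue and update the counter."""
        if self.steps is None:
            raise RuntimeError("axis not homed")
        target = self.steps_for(angle_deg)
        delta = target - self.steps
        self.steps = target
        return delta


axis = ArmAxis()
axis.home(lambda n: n >= 37)  # pretend the barrier trips after 37 steps
print(axis.move_to(90), axis.move_to(45))  # prints 4000 -2000
```

With these assumed numbers, one motor step turns the arm by 360 / 16000 = 0.0225°, whereas without the pulley reduction it would be five times coarser (0.1125°). Because no encoder confirms the position, any lost step goes unnoticed until the next homing run, which is the usual trade-off of open-loop stepper control.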

software

We decided to use openFrameworks as a toolkit for driving the interface and handling the image processing. Besides its seamless integration of OpenCV, which we used heavily for converting the images, it provides a wealth of convenient methods for working with vectors. Its straightforward serial interface and its support for multithreading also came in handy.

Transforming a regular photo into a format our automaton can draw takes several steps, which fall roughly into three categories: preprocessing, vectorization, and postprocessing.

The preprocessing phase conditions the image so that only the content needed for vectorization remains, and it emphasizes significant edges by adjusting the contrast. To vectorize the image, we applied the well-known Canny edge detector, which returns a binary image containing most of the significant edges. Over the course of our experiments, we found that the edges improve when the Canny algorithm is applied to several intermediate states of the preprocessing phase and the results are combined into a single edge image.

To give the drawings more depth, we needed a way to represent different brightness levels. We therefore conducted a series of experiments with different filling methods, all based on a two-bit (i.e. four-level) quantized version of the preprocessed image. In the end, we settled on seven different styles; all the details can be found in the thesis, which can be downloaded below.
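Two of the steps described above, combining several edge images into one and quantizing brightness to two bits, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the thesis code: plain nested lists stand in for OpenCV images, the edge maps are assumed to be already computed (for example by Canny), merging them with a pixel-wise OR is our assumption about how the results are combined, and the horizontal hatching in `hatch_segments` is a hypothetical example of a filling method, not one of the seven styles.

```python
# Minimal sketch with plain nested lists standing in for images.
# Assumptions: OR-merge of edge maps; hatching fill is a hypothetical style.

def quantize_2bit(gray):
    """Map 8-bit gray values (0..255) to four levels 0..3 (0 = darkest)."""
    return [[v >> 6 for v in row] for row in gray]


def combine_edges(edge_maps):
    """Merge same-sized binary edge images (e.g. Canny outputs of several
    preprocessing stages) with a pixel-wise logical OR."""
    height, width = len(edge_maps[0]), len(edge_maps[0][0])
    return [
        [int(any(m[y][x] for m in edge_maps)) for x in range(width)]
        for y in range(height)
    ]


def hatch_segments(levels, spacing_by_level=(2, 4, 8, 0)):
    """Hypothetical fill: horizontal hatch lines, denser for darker levels.

    spacing_by_level[k] is the row spacing for level k; 0 means no lines.
    Returns (row, x_start, x_end) segments with inclusive end points.
    """
    segments = []
    for y, row in enumerate(levels):
        x = 0
        while x < len(row):
            spacing = spacing_by_level[row[x]]
            if spacing and y % spacing == 0:
                # Extend the segment over the run of pixels with the same level.
                start = x
                while x < len(row) and row[x] == row[start]:
                    x += 1
                segments.append((y, start, x - 1))
            else:
                x += 1
    return segments


gray = [
    [10, 10, 200, 90],
    [10, 10, 200, 90],
]
print(hatch_segments(quantize_2bit(gray)))  # prints [(0, 0, 1), (0, 3, 3)]
```

An OR-merge keeps every edge found in any intermediate state, at the cost of also keeping each state's noise. In an OpenCV-based pipeline the same merge would be `cv::bitwise_or` applied to the `cv::Canny` outputs.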